How AI Works with Diagrams: From Input to Output

Here’s the shortest way to get oriented: AI is a pipeline. Data goes in, a model gets trained on it, and the trained model produces results you can test. If any stage is weak, the whole AI system feels “random.” AI isn’t magic; it’s engineering plus statistics.

Below are five diagrams. They’re not decorative. Use them to explain an AI feature to a teammate, or to debug why an AI tool is failing.

Step 0: Artificial intelligence, machine learning, and a quick taxonomy

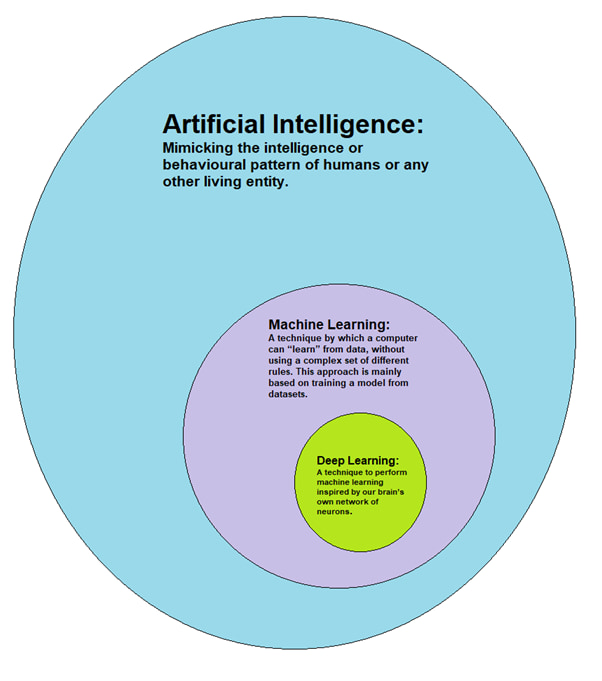

Artificial intelligence is the umbrella term. Machine learning is a subset of AI, and it is the subset most products rely on. Deep learning is a machine learning approach built on neural networks with many layers. The discipline is the same at every level: build, measure, iterate.

A simple map:

- Artificial intelligence (broad)

  - Machine learning (a subset that learns from examples)

    - Deep learning (many-layer neural networks)

      - Generative AI / GenAI (can generate new content)

Diagram 1 — the core pipeline (the following diagram is the whole story)

data sets → training → model → inference → output

(An algorithm feeds into training; a request + input feeds into inference.)
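To make Diagram 1 concrete, here is a minimal Python sketch of the whole pipeline. It assumes a hypothetical toy data set and uses a nearest-centroid classifier as the “algorithm”; both are illustrative choices, not what any particular product uses. Training turns the data set into a model (one average point per label), and inference takes a request input and returns the closest label.

```python
from statistics import mean

# Hypothetical toy data set: (feature vector, label) pairs.
DATA = [
    ((1.0, 1.2), "spam"),
    ((0.9, 1.0), "spam"),
    ((3.1, 2.9), "ham"),
    ((3.0, 3.2), "ham"),
]

def train(data):
    """Training step. The 'algorithm' here is nearest centroid: average
    the feature vectors of each label. The centroids are the model."""
    by_label = {}
    for features, label in data:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(col) for col in zip(*rows))
        for label, rows in by_label.items()
    }

def infer(model, features):
    """Inference step: return the label whose centroid is closest
    (squared Euclidean distance) to the request input."""
    def dist2(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(model, key=lambda label: dist2(model[label]))

model = train(DATA)              # data sets -> training -> model
print(infer(model, (1.1, 0.9)))  # request + input -> inference -> output
```

Swap the toy algorithm for gradient descent or a neural network and the shape stays the same: train once, then run inference many times.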

Step 1: Data sets (where bias and blind spots start)

AI learns patterns from examples. Given enough volume, it will also learn patterns you didn’t intend: if your data sets are skewed, it picks up correlations that are accidental, unfair, or just wrong. That’s where bias usually begins: not in the model code, but in what you collected, filtered, and labeled.

What you’re trying to capture are patterns in data that generalize beyond a single screenshot or a single week of logs.

A basic data science checklist that keeps teams honest:

- define what “good” looks like (metrics)

- sample across real users and edge cases

- log failures and retrain intentionally
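The first two checklist items work best together: pick a metric, then compute it per user segment, not only in aggregate. A minimal Python sketch, assuming hypothetical evaluation records tagged with a segment name:

```python
from collections import defaultdict

# Hypothetical evaluation records: (segment, predicted label, true label).
RECORDS = [
    ("desktop", 1, 1), ("desktop", 0, 0), ("desktop", 1, 1), ("desktop", 0, 0),
    ("desktop", 1, 1), ("desktop", 0, 0), ("mobile", 1, 0), ("mobile", 0, 1),
]

def accuracy(pairs):
    """Fraction of (predicted, true) pairs that match."""
    pairs = list(pairs)
    return sum(p == t for p, t in pairs) / len(pairs)

def accuracy_by_segment(records):
    """Group records by segment and compute accuracy for each slice."""
    groups = defaultdict(list)
    for segment, pred, true in records:
        groups[segment].append((pred, true))
    return {segment: accuracy(pairs) for segment, pairs in groups.items()}

overall = accuracy((p, t) for _, p, t in RECORDS)
print(f"overall: {overall:.2f}")
for segment, acc in accuracy_by_segment(RECORDS).items():
    print(f"{segment}: {acc:.2f}")
```

The headline 75% hides a segment where the model is always wrong, which is exactly the kind of blind spot that sampling across real users is meant to surface.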

Collect data under explicit rules: what you include, what you exclude, and why. If you skip this, you get an AI model that looks good in a demo and breaks in production.

One myth keeps coming up: that large models are trained on the entire internet. They are not. They are trained on massive corpora drawn from mixed sources and filtered for quality, licensing, and safety, which is a lot of data but not “everything.”

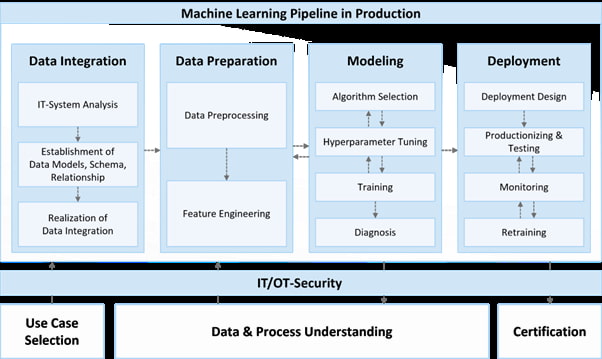

Diagram 2 — the data pipeline in practice

raw data → cleaning → sampling → split → training data
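Each stage of Diagram 2 can be a small, testable function. Here is a minimal Python sketch, assuming hypothetical support-ticket rows with `text` and `label` fields. The specific rules (drop missing and duplicate rows, cap examples per label, hold out 25%) are example choices, not a standard.

```python
import random

def clean(rows):
    """Cleaning: drop rows with a missing field and exact duplicates."""
    seen, out = set(), []
    for row in rows:
        key = (row.get("text"), row.get("label"))
        if None in key or key in seen:
            continue
        seen.add(key)
        out.append(row)
    return out

def sample(rows, max_per_label, seed=0):
    """Sampling: keep at most max_per_label rows per label so one class
    cannot dominate (one possible sampling rule among many)."""
    rng = random.Random(seed)
    rows = rows[:]
    rng.shuffle(rows)
    counts, out = {}, []
    for row in rows:
        n = counts.get(row["label"], 0)
        if n < max_per_label:
            counts[row["label"]] = n + 1
            out.append(row)
    return out

def split(rows, test_fraction=0.25, seed=0):
    """Split: shuffle with a fixed seed, then hold out a test set."""
    rng = random.Random(seed)
    rows = rows[:]
    rng.shuffle(rows)
    n_test = int(len(rows) * test_fraction)
    return rows[n_test:], rows[:n_test]   # (training data, test data)

raw = [
    {"text": "refund please", "label": "billing"},
    {"text": "refund please", "label": "billing"},   # duplicate
    {"text": None, "label": "billing"},              # missing text
    {"text": "app crashes", "label": "bug"},
    {"text": "charged twice", "label": "billing"},
    {"text": "login fails", "label": "bug"},
    {"text": "wrong invoice", "label": "billing"},
]
train_rows, test_rows = split(sample(clean(raw), max_per_label=2))
print(len(train_rows), len(test_rows))
```

Fixing the random seed makes the split reproducible, so a later evaluation compares against the same held-out rows.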

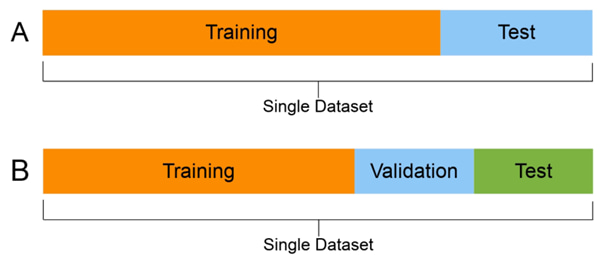

Step 2: Learning loops (one type of machine learning at a time)

What separates one type of machine learning from another is the feedback loop: what the model sees and what it optimizes. Keep this table in your head, and you’ll stop mixing up AI terms.

| Type | What the model sees | What it optimizes | Typical use |
|---|---|---|---|
| Supervised learning | labeled examples | error vs. target | fraud flags, ranking |
| Reinforcement learning | reward signal | reward over time | control policies |

This is the “learn from data” idea in one line: the model updates parameters so future predictions improve.
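Here is that idea for supervised learning as a minimal Python sketch: gradient descent fitting a straight line to labeled examples by minimizing mean squared error. The data, the learning rate `lr`, and the epoch count are arbitrary illustrative values.

```python
def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Supervised learning sketch: fit y ~ w*x + b by gradient descent
    on mean squared error (the 'error vs. target' column above)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error for every labeled example.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = mean(error^2) with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Parameter update: take a small step against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical labeled examples generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)
print(round(w, 3), round(b, 3))
```

Each pass nudges `w` and `b` in the direction that reduces error; that update step is the learning. Neural networks do the same thing with millions or billions of parameters.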

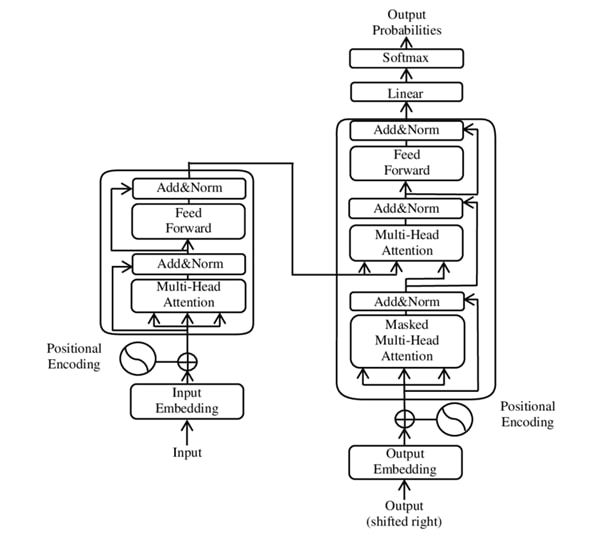

Step 3: Neural network basics and why attention changes scale

A neural network is a stack of layers, and each layer is made of units (also called nodes or neurons). You can picture a neuron as a small function: it takes numbers, computes a weighted sum plus a bias, applies a nonlinearity, and passes the result forward.
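A minimal Python sketch of that description, assuming ReLU as the nonlinearity and made-up weights: a unit computes a weighted sum plus a bias, a layer is a list of units reading the same inputs, and the network passes each layer’s output forward to the next.

```python
def neuron(inputs, weights, bias):
    """One unit: weighted sum of inputs plus a bias, then a nonlinearity."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, z)   # ReLU activation

def layer(inputs, weight_rows, biases):
    """A layer is many units reading the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, layers):
    """Stack layers: each layer's outputs become the next layer's inputs.
    ReLU is used on every layer here for brevity; real networks often
    use a different activation on the output layer."""
    for weight_rows, biases in layers:
        inputs = layer(inputs, weight_rows, biases)
    return inputs

# Hypothetical weights: 2 inputs -> 2 hidden units -> 1 output unit.
LAYERS = [
    ([[0.5, -1.0], [1.0, 1.0]], [0.0, -0.5]),
    ([[1.0, 2.0]], [0.1]),
]
print(forward([2.0, 1.0], LAYERS))
```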

Deep learning stacks many layers so the model can represent complex patterns. The classic review paper summarizes the point like this: “Deep learning allows… multiple processing layers to learn representations… with multiple levels of abstraction.” Source: LeCun, Bengio, Hinton (2015), Nature review.

Modern attention-based models, such as Transformers, stack attention layers to build hierarchical features over sequences. In natural language processing (NLP), attention lets the model weigh the relationships between any pair of words in the context, not just adjacent ones.

Diagram 3 — attention sketch (why “next word” works)

tokens → vectors → attention → context → next word
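The attention step in Diagram 3 can be sketched as scaled dot-product attention, the form used in Transformers. This minimal Python version is simplified on purpose: it uses the token vectors directly as queries, keys, and values, whereas a real model first multiplies them by learned projection matrices and runs many attention heads in parallel. The three 2-dimensional token vectors are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)                      # subtract max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention(queries, keys, values):
    """Scaled dot-product attention: each position's output is a weighted
    average of all value vectors, weighted by how well its query matches
    every key (every position, not just the adjacent ones)."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in keys])
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Hypothetical token vectors, used as queries, keys, and values alike.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(vectors, vectors, vectors):
    print([round(x, 3) for x in row])
```

Every output row mixes information from all three tokens, weighted by similarity, which is what “relationships between words, not just what’s adjacent” means mechanically.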

Large language models are attention-based models trained on large amounts of text to predict the next token.

You’ll see “large language models” written out, and “LLMs” in product docs. The singular “LLM” refers to a single model.

When the model handles human language, it relies on token-level statistics, not a human-style world model. And because the response is usually sampled from a probability distribution over possible next tokens, the same request can produce different results.
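A minimal Python sketch of that variability, assuming made-up candidate tokens and scores: the model’s scores (logits) become probabilities through a softmax scaled by a temperature, and the next token is drawn at random from them.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert model scores into probabilities. Lower temperature
    sharpens the distribution; higher temperature flattens it."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next(tokens, logits, temperature, rng):
    """Draw one token at random according to its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidate next tokens and scores for one prompt.
tokens = ["Paris", "France", "the"]
logits = [3.0, 2.0, 0.5]

for seed in range(3):
    rng = random.Random(seed)
    print(seed, sample_next(tokens, logits, temperature=1.0, rng=rng))
```

With the same seed, or with greedy decoding (always taking the top token), the output repeats; whether a hosted service exposes those controls varies.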

Step 4: Inference (where users feel the system)

At inference time, you send a prompt, it gets tokenized, the AI model scores candidate next tokens, and the response is produced one token at a time. This is the part most people interact with.

Diagram 4 — one request (like ChatGPT)

prompt → tokenize → model → probabilities → response
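Diagram 4 can be traced end to end with a toy stand-in for an LLM: a bigram model that only counts which word follows which. This is a minimal Python sketch under heavy assumptions (whitespace tokenization, a three-sentence hypothetical corpus, greedy decoding). Real LLMs use subword tokenizers and a neural network to produce the probabilities, but the prompt → tokens → probabilities → response flow is the same.

```python
from collections import Counter, defaultdict

def tokenize(text):
    """Toy tokenizer: lowercase and split on whitespace.
    Real LLM tokenizers split text into subword pieces instead."""
    return text.lower().split()

def train_bigrams(corpus):
    """'Training': count which token follows which."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        toks = tokenize(sentence)
        for prev, nxt in zip(toks, toks[1:]):
            counts[prev][nxt] += 1
    return counts

def next_token_probs(model, token):
    """'Model -> probabilities': normalize the follow-up counts."""
    followers = model.get(token)
    if not followers:
        return {}
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}

def respond(model, prompt, max_tokens=5):
    """Greedy decoding: repeatedly append the most likely next token."""
    out = tokenize(prompt)
    for _ in range(max_tokens):
        probs = next_token_probs(model, out[-1])
        if not probs:
            break
        out.append(max(probs, key=probs.get))
    return " ".join(out)

# Hypothetical three-sentence training corpus.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]
model = train_bigrams(corpus)
print(respond(model, "The dog"))   # → the dog sat on the cat sat
```

The output is fluent-looking and meaningless, a small-scale version of the point above: likely continuations, not understanding.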

If you want a non-mystical mental model of how these models work: they predict likely continuations based on patterns learned during training. That is, in fact, how many production chat features work.

Also: AI depends on hardware and budgets. Serving is a trade-off between latency and cost, and it depends on processors (usually GPUs or other accelerators) doing fast matrix math. That’s similar to how our homework help AI works here at Edubrain.
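A back-of-the-envelope Python sketch of why serving comes down to matrix math. Multiplying an m×k matrix by a k×n matrix costs about 2·m·k·n floating-point operations, and a widely used rule of thumb puts a dense transformer’s forward pass at roughly 2 FLOPs per parameter per token. The model size and accelerator throughput below are hypothetical, and the estimate ignores memory bandwidth, which often limits token generation in practice.

```python
def matmul_flops(m, k, n):
    """(m x k) @ (k x n): m*n*k multiplications and about as many
    additions, so roughly 2*m*k*n FLOPs."""
    return 2 * m * k * n

def forward_flops_per_token(n_params):
    """Rule of thumb for dense transformer models: about 2 FLOPs
    per parameter per generated token."""
    return 2 * n_params

def seconds_per_token(n_params, flops_per_second, utilization):
    """Idealized compute-bound estimate; ignores memory bandwidth,
    batching, and network overheads that often dominate in practice."""
    return forward_flops_per_token(n_params) / (flops_per_second * utilization)

# One token (a 1 x 4096 row) through one hypothetical 4096 x 4096 weight matrix.
print(matmul_flops(1, 4096, 4096))   # → 33554432
# Hypothetical: a 7-billion-parameter model on an accelerator sustaining
# 100 teraFLOP/s at 40% utilization.
print(seconds_per_token(7e9, 100e12, 0.4))
```

At those assumed numbers the compute floor is about 0.35 ms per token; real latency is higher once memory traffic, batching, and network overhead are counted.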

Step 5: AI systems, AI agents, and “do the task” behavior

An “AI system” is the product wrapper around a model: safety checks, monitoring, storage, and UI. An AI agent is a pattern in which the model can call tools, keep state, and pursue specific goals.

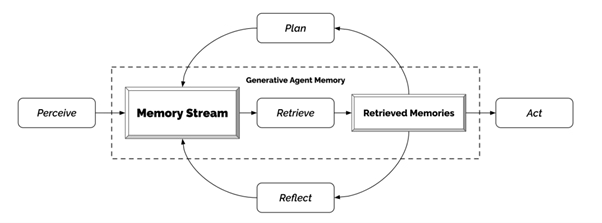

Diagram 5 — an AI agent loop inside computer systems

goal → plan → call tools via API → observe → update → make decisions → act

(Diagnostics and human feedback feed back into the loop.)

This loop is how you get workflows like speech recognition followed by ticket creation, or autonomous vehicles acting on sensor data. It’s powerful, but it multiplies failure modes: a bad plan can quickly trigger a bad tool call.
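A minimal Python sketch of Diagram 5 with guardrails that limit those failure modes: a step cap, a tool allowlist, an approval hook standing in for human feedback, and a log for diagnostics. The tools and the rule-based `plan` function are hypothetical stand-ins; in a real agent the model would produce the plan.

```python
# Hypothetical tools; in a real system each would call an external API.
def search_tickets(query):
    """Pretend search: finds nothing for queries mentioning 'new'."""
    return [] if "new" in query else ["TICKET-1"]

def create_ticket(summary):
    return f"created: {summary}"

TOOLS = {"search_tickets": search_tickets, "create_ticket": create_ticket}

def plan(goal, observations):
    """Hypothetical planner standing in for the model: picks the next
    tool call from the goal and what has been observed so far."""
    if not observations:
        return ("search_tickets", goal)
    if observations[-1] == []:            # nothing found, so file a ticket
        return ("create_ticket", goal)
    return None                           # goal satisfied: stop

def run_agent(goal, approve=lambda name, arg: True, max_steps=5):
    observations, log = [], []
    for step in range(max_steps):         # hard cap so a bad plan cannot loop forever
        action = plan(goal, observations) # plan / make decisions
        if action is None:
            break
        name, arg = action
        if name not in TOOLS:             # allowlist: refuse unknown tools
            log.append(f"step {step}: unknown tool {name}")
            break
        if not approve(name, arg):        # human feedback hook
            log.append(f"step {step}: {name} not approved")
            break
        result = TOOLS[name](arg)         # act: call the tool
        observations.append(result)       # observe and update state
        log.append(f"step {step}: {name}({arg!r}) -> {result!r}")  # diagnostics
    return observations, log

obs, log = run_agent("new login bug")
print("\n".join(log))
```

Passing an `approve` function that rejects `create_ticket` stops the loop before any side effect happens, which is the cheapest place to catch a bad plan.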

Stats you can cite (and a small graph)

Stanford’s AI Index reports that in 2023, 51 notable machine learning models were produced by industry, versus 15 by academia. Source: Stanford HAI AI Index 2024 (Chapter 1).

It also gives concrete training compute examples that show the scale jump:

- AlexNet: ~470 petaFLOPs

- Transformer (2017): ~7,400 petaFLOPs

- Gemini Ultra: ~50,000,000,000 petaFLOPs
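The scale jump is easier to read as ratios. A small Python sketch that uses only the three figures quoted above:

```python
import math

# Training compute figures as quoted above from the AI Index 2024 (petaFLOPs).
compute = {
    "AlexNet": 470,
    "Transformer (2017)": 7_400,
    "Gemini Ultra": 50_000_000_000,
}

baseline = compute["AlexNet"]
for name, pf in compute.items():
    ratio = pf / baseline
    print(f"{name}: {ratio:,.0f}x AlexNet, ~{math.log10(ratio):.1f} orders of magnitude")
```

By these figures, Gemini Ultra used roughly 10^8 times AlexNet’s training compute and about 6.8 million times that of the 2017 Transformer.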

Table: model production and scale (AI Index 2024)

| Item | Value |
|---|---|
| Notable models (industry, 2023) | 51 |
| Notable models (academia, 2023) | 15 |
| Transformer training compute (2017, petaFLOPs) | ~7,400 |

Mini bar chart (models, 2023):

- Industry: ██████████████████████████████ 51

- Academia: ████████ 15

Where GenAI fits (foundation models in the AI landscape)

Foundation models are large models pretrained on broad data and then reused across the surrounding AI ecosystem: chat, code, search, and media. Examples many people recognize are Llama (language) and Stable Diffusion (images).

If you’re building on a model through a service interface, the big questions are practical:

- What datasets shaped it?

- What are the limits for your domain?

- How do you evaluate and monitor drift?
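One common way to put a number on drift is the Population Stability Index (PSI), which compares the distribution of a feature (or of model outputs) in a baseline window against a recent one. A minimal Python sketch, assuming hypothetical histograms already bucketed into the same bins; PSI is one option among several, alongside measures such as KL divergence or a Kolmogorov–Smirnov test.

```python
import math

def proportions(counts, eps=1e-6):
    """Turn bin counts into proportions; eps keeps empty bins away from log(0)."""
    total = sum(counts)
    return [max(c / total, eps) for c in counts]

def psi(baseline_counts, current_counts):
    """Population Stability Index over two histograms with the same bins:
    sum of (actual - expected) * ln(actual / expected)."""
    expected = proportions(baseline_counts)
    actual = proportions(current_counts)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))

# Hypothetical counts for one input feature (say, prompt length),
# bucketed into the same four bins at launch and in the latest week.
launch_counts = [250, 400, 250, 100]
week_counts = [100, 300, 350, 250]
print(round(psi(launch_counts, week_counts), 3))   # → 0.337
```

Commonly cited rules of thumb treat a PSI below about 0.1 as stable and above about 0.25 as a significant shift, so this example would warrant a closer look; thresholds are best set per use case.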

This is where “AI technology” stops being abstract and becomes product work.

Quick checks before you trust an AI response

- Is the answer based only on the model’s training data, or on something you can verify?

- Can you construct a counterexample that exposes bias?

- Does the system retrieve from a knowledge base, or is it purely generative?

- If it’s conversational, can you reproduce the same response twice under the same conditions?
